It’s been a week since the xz backdoor dropped. I stand by my earlier conclusion that the community really dodged a bullet, a view that many other well-respected voices in the community have echoed. Since this incident is likely to go down in history as a watershed moment in OSS supply chain security (for better or for worse), I’ve been keeping an eye on different insights into the issue, the state of OSS security, and what we can do as a community to avoid this in the future. I’ve seen some great advice, some questionable advice, and, since the backdoor has generated so much news, unfortunately some downright crazy advice from armchair security experts.

xz Backdoor Discovery: The Recap

What was revealed last week made everyone rightly concerned about not only what “Jia Tan” committed to xz, but also what they committed to other repositories. This past week saw a flurry of scrutiny in libarchive, oss-fuzz, and other repositories where “Jia Tan” made changes. It is still unclear whether these other commits were malicious; some are head-scratchers, and some have at least a bit of code smell surrounding them. I applaud and appreciate the diligence of these repositories’ maintainers this week in verifying each change and, when unsure about the nature of a change, simply reverting it out of an abundance of caution.

There have been some fantastic, comprehensive timelines posted covering what “Jia Tan” and their cadre of sock puppet accounts did over the past three years to develop this backdoor; the patience and planning that went into pulling this off are simply astonishing.

One of the key takeaways from how this backdoor was integrated into xz is that most reviewers would dismiss the backdoor changes as “configure script testing junk,” and that most of the mechanism for inserting the backdoor was hidden behind changes with plausible commit descriptions. But the thought that kept lingering in the back of my mind, one echoed by many of my peers, is: “What about the backdoors we don’t know about?”

How Bad Is It?

When you start to think about all the projects out there that might have been owned in a similar way, it’s quite understandable to be worried. At this point (and granted, the pendulum has swung into full paranoia mode for some organizations), can you legitimately trust any piece of software that you or your organization didn’t write yourself? And as a software development organization, what do you do moving forward? How much can you actually trust upstream dependencies now that it’s been proven this sort of thing can happen and, more importantly, has happened?

Neither I nor anyone else can predict what aftershocks this event will have on development practices, but I do believe the way software is built today won’t change much. Sure, we’ll see some knee-jerk over-rotations, and maybe some organizations will institute more thorough reviews before importing OSS modules, but asking companies to suddenly stop using third-party dependencies because they might have been backdoored years ago would fundamentally break the way software is developed today. I also believe, pessimistically, that after the dust settles on this incident and organizations have instituted more thorough vetting, the bad actors will just find another way to game the system.

As I said earlier, I’ve seen some pretty bad takes on this backdoor in the past week. And to be honest, I think a lot of “experts” are missing the point I made in my last post. The enabling factor for this backdoor was that distribution maintainers linked “all the things” into sshd (in the affected distributions, sshd was patched to use libsystemd for service notification, and libsystemd in turn pulls in liblzma). If that hadn’t happened, we wouldn’t be having this conversation right now. Suggestions like “all you needed here was a VPN,” “all you needed here was port knocking,” or “all you needed here was one sshd behind another sshd” simply fail to understand the problem: in the vulnerable cases, sshd was flat out built incorrectly. It’s almost as if everyone thinks, “Well, there is nothing I can do about the way sshd is built, so I guess I have to defend myself in other ways.”
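If you want to check whether the sshd on a given host was built this way, one rough approach is to look at the shared libraries it loads. The sketch below is a minimal example, assuming a glibc-based Linux host with `ldd` available and sshd at `/usr/sbin/sshd` (paths vary by distribution); the helper names are hypothetical. `ldd` lists every library the dynamic loader would pull in, including indirect ones, so liblzma shows up even though sshd only links it through libsystemd.

```python
import os
import re
import subprocess

# Captures the first token of an ldd line, which is the library name or path, e.g.
# "\tliblzma.so.5 => /lib/x86_64-linux-gnu/liblzma.so.5 (0x00007f...)"
LDD_LINE = re.compile(r"^\s*(\S+)")


def linked_libraries(ldd_output: str) -> list[str]:
    """Return the shared-library names (basenames) listed in ldd output."""
    libs = []
    for line in ldd_output.splitlines():
        match = LDD_LINE.match(line)
        if match:
            # Normalize entries like "/lib64/ld-linux-x86-64.so.2" to a bare name.
            libs.append(os.path.basename(match.group(1)))
    return libs


def links_library(ldd_output: str, prefix: str) -> bool:
    """True if any listed library name starts with `prefix`, e.g. "liblzma"."""
    return any(name.startswith(prefix) for name in linked_libraries(ldd_output))


def sshd_links_liblzma(sshd_path: str = "/usr/sbin/sshd") -> bool:
    """Run ldd on sshd (assumed path) and check for liblzma anywhere in its tree."""
    result = subprocess.run(["ldd", sshd_path], capture_output=True, text=True, check=True)
    return links_library(result.stdout, "liblzma")
```

A `True` from `sshd_links_liblzma()` doesn’t mean the host is compromised; it only means sshd carries the same kind of extra dependency chain that made the xz backdoor reachable.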

While I may personally think that is defeatist, I have to accept that my customers simply don’t have time to pressure Debian and Red Hat to change how they build their distributions. My customers are in the business of delivering software to their own customers, and at some point they need to be able to trust the components they are using, whether those are base OS and container image dependencies or code modules they imported during the build. This fact, combined with what has happened this past week, is why I believe runtime observability is more important now than ever.

How Do We Move Forward?

Given the sheer number of third-party components out there, we just don’t have time to scan them all, and something is going to slip through the cracks of the review process. Scanning every repository’s code won’t help either; the xz backdoor wasn’t really even in the source code. It was hidden in binary test files and in a modified m4 macro that shipped only in the release tarballs, so unless someone writes a sandbox for m4 and configure/autoconf scripts and tries to figure out whether every command is malicious (narrator: this is impossible), there are always going to be things that don’t get noticed.

But all is not lost.

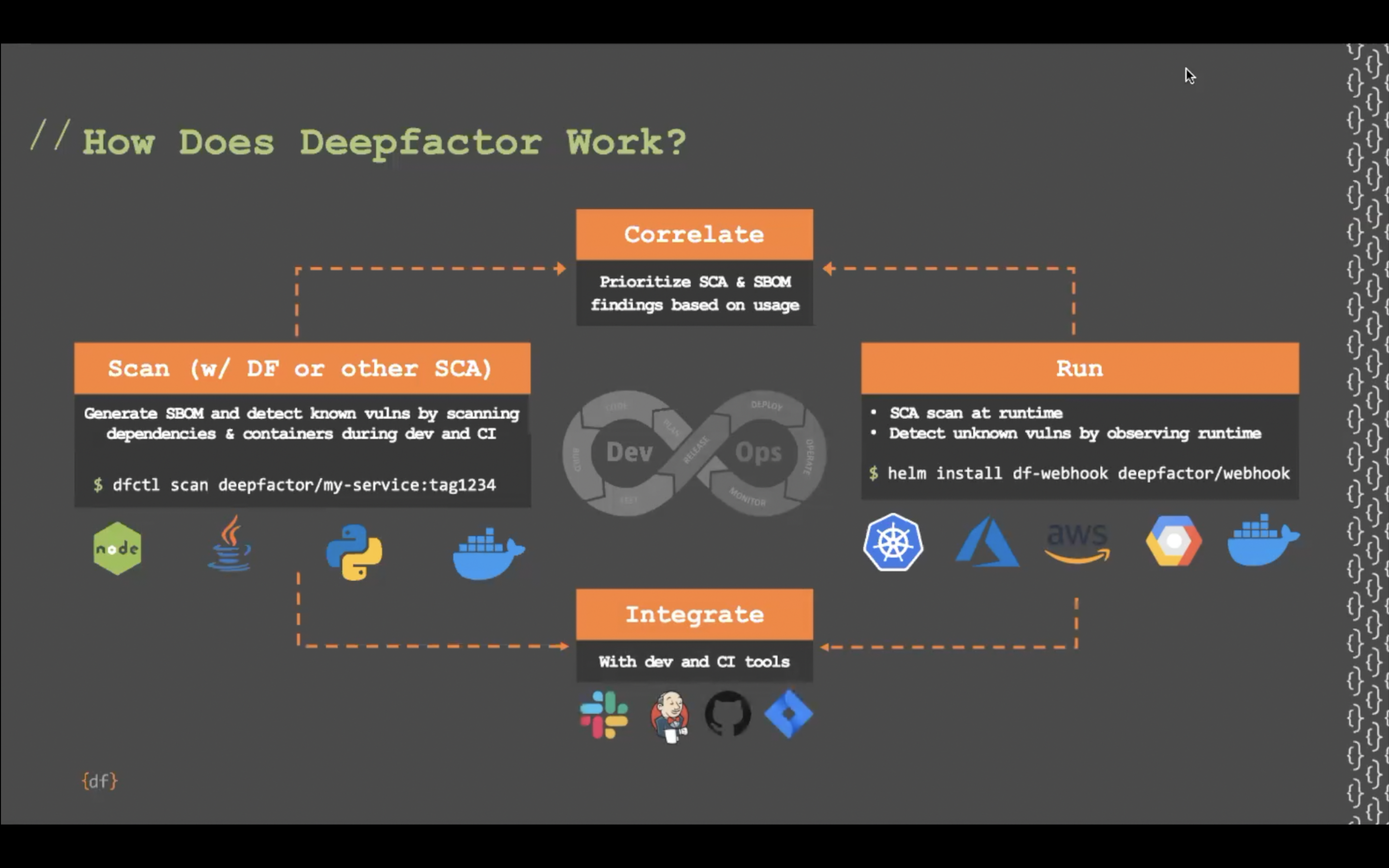

One of the things we do very well at Deepfactor is monitoring what an application does at runtime. In fact, we don’t even need to look at the code that was used to produce the thing you’re running. If you have the code, that’s great, and we can give you even more insights, but the code is not needed to do what we do. If we see the application doing something typical applications don’t do (and yes, you get to configure what that means if you want), it’s an instant red flag, and then you get to decide what to do about it.

For example, let’s say the xz backdoor wasn’t found last week and that everyone got infected. We now know what the backdoor does; it uses system() to run whatever command the attacker embedded into their SSH handshake. You’d never find this in a code scan; the code was far too obfuscated. The only way to know if the application was compromised would be to actually see the system() API being called, at the exact moment it was called. And that’s what we do.

While I personally dislike system(), it does have its uses. However, if it’s not something your application should be calling, we notice the call and tell you that the application has violated its policy, so that you can investigate what is going on. We also provide a full stack trace of the execution leading up to the call, so you know which dependencies are involved. So if someone had used the xz backdoor to exploit your application, you would know from the aberrant behavior it suddenly started exhibiting.
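This post doesn’t describe how Deepfactor instruments applications, so the sketch below is only a small Python analogy for the idea of a runtime policy that flags unexpected process-spawning calls and records the stack that led to them. It uses CPython’s audit hooks (`sys.addaudithook`, Python 3.8+), which fire when code calls `os.system`, `subprocess.Popen`, and similar functions. The deny-list and the `policy_hook` name are hypothetical. Because it only sees calls made through the Python runtime, a hook like this would not have caught the xz backdoor itself, which called system() from native code inside sshd.

```python
import os
import sys
import traceback

# Hypothetical policy: audit events this application should never raise.
# These event names are raised by CPython's os and subprocess modules.
DENIED_EVENTS = {"os.system", "subprocess.Popen", "os.exec", "os.posix_spawn"}

# Each entry is (event name, event arguments, formatted stack trace).
violations: list[tuple[str, tuple, str]] = []


def policy_hook(event: str, args: tuple) -> None:
    """Record a violation, with the call stack leading to it, for denied events."""
    if event in DENIED_EVENTS:
        # Drop the last frame, which is this hook itself.
        stack = "".join(traceback.format_stack()[:-1])
        violations.append((event, args, stack))
        print(f"POLICY VIOLATION: {event} {args!r}", file=sys.stderr)


# Audit hooks cannot be removed once installed; this one stays active for
# the life of the interpreter.
sys.addaudithook(policy_hook)
```

After the hook is installed, any later `os.system(...)` call anywhere in the process, including inside a third-party dependency, lands in `violations` with the Python stack showing which module made the call.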

Now take what I just described and multiply it by the number of libraries or third-party dependencies in your application, and then multiply that again by the number of container image/OS packages installed. Hopefully you are beginning to realize the scope of what may be lurking in any of those components. What you need is a safety net, a way of providing defense in depth, and that’s why we have put so much effort into what Deepfactor offers.

Another Experiment

If you are a company that deploys software in containers, then you need to choose a container image on which to base your application, and that image needs to contain everything your application requires to run. If you develop monolithic VM-based applications, you need to make similar decisions about what is required and which distributions you support. If you choose wisely and thin out your container images as I suggested in my blog post earlier this week, you are probably ahead of 95% of other organizations out there. Unfortunately, more often than not, I see applications built on fat images or fat VMs, which leaves a lot of room for unknown backdoors. To see how bad things are, I pretended to be a developer of a Java application and built container images from each of the following distribution base images plus the JDK/JRE. This gives a reasonable baseline for the minimum number of support packages required to run a Java application.

This table shows the number of packages installed in each case.

| Distribution | # of base packages | # of packages added for OpenJDK | Total |
| --- | ---: | ---: | ---: |
| alpine:latest | 15 | 29 | 44 |
| ubuntu:latest | 102 | 40 | 142 |
| centos:7 | 148 | 38 | 186 |
| fedora:latest | 143 | 242 | 385 |

I included centos:7 because even though it is very old, we continue to see this used quite extensively in customer environments.
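The post doesn’t say exactly how these counts were gathered. As one way to reproduce numbers like these, the sketch below counts installed packages from the on-disk package databases that Debian/Ubuntu (dpkg) and Alpine (apk) images keep; RPM-based images such as centos:7 and fedora store theirs in an RPM database, so `rpm -qa | wc -l` inside the container is the simpler route there. The database paths are the standard locations, but the parsing is a minimal sketch: it counts dpkg stanzas whose `Status` ends in `installed` and apk stanzas that carry a `P:` (package name) line.

```python
from pathlib import Path
from typing import Optional

# Standard package-database locations inside an image's root filesystem.
DPKG_STATUS = "var/lib/dpkg/status"
APK_INSTALLED = "lib/apk/db/installed"


def _stanzas(text: str) -> list[dict[str, str]]:
    """Split a blank-line-separated database into {field: value} dicts."""
    records = []
    for block in text.split("\n\n"):
        fields: dict[str, str] = {}
        for line in block.splitlines():
            key, sep, value = line.partition(":")
            # Skip continuation lines (they start with whitespace) and blank lines.
            if sep and not line[:1].isspace():
                fields.setdefault(key, value.strip())
        if fields:
            records.append(fields)
    return records


def count_dpkg_installed(status_text: str) -> int:
    """Count dpkg packages whose Status is '... installed' (not config-files etc.)."""
    return sum(
        1
        for rec in _stanzas(status_text)
        if "Package" in rec and rec.get("Status", "").split()[-1:] == ["installed"]
    )


def count_apk_installed(installed_text: str) -> int:
    """Count stanzas in Alpine's installed database that name a package (P:)."""
    return sum(1 for rec in _stanzas(installed_text) if "P" in rec)


def count_packages(rootfs: str) -> Optional[int]:
    """Count packages under an extracted image rootfs, or None if no known DB."""
    root = Path(rootfs)
    if (root / DPKG_STATUS).is_file():
        return count_dpkg_installed((root / DPKG_STATUS).read_text())
    if (root / APK_INSTALLED).is_file():
        return count_apk_installed((root / APK_INSTALLED).read_text())
    return None  # e.g. RPM-based images; use `rpm -qa` instead
```

To use it, extract an image’s filesystem (for example with `docker create` followed by `docker export` into a directory) and call `count_packages` on that directory before and after installing the JDK.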

Even the thinnest container image still has 44 packages that could conceivably have something nefarious lurking in them. While 44 is nearly an order of magnitude better than 385, the potential for malicious code is still not zero. The fedora example is especially concerning: installing the headless JDK pulls in audio codecs, Bluetooth support, CUPS (for printing, because all containerized applications do that), cdparanoia (for ripping audio CDs, again from your containerized microservice), mpg123 (for playing MP3 files from your microservice), and so on.

The fedora example is indicative of the overall problem: trying to cover every business case by linking every possible library into things that arguably don’t need that functionality. This is exactly what happened with the xz backdoor.

Now let’s pretend you thinned your container image and substantially reduced the number of packages, and/or used a distroless or scratch container, and let’s further pretend that there are zero backdoors in that image. This doesn’t even begin to address the library dependencies that ship with your application rather than with the base image. (For example, did the JSON parsing library your developer imported from GitHub contain a backdoor?) You’ve taken steps to make your container images themselves backdoor-free, but what about the application itself? You still need a way to detect unwanted behaviors, even after taking precautions against the first broad class of ways backdoors might make their way into your environment. To put things in perspective, one of the demonstration applications we use at Deepfactor has over 3,200 dependencies. Many of those don’t come from the container image the application runs on, which means no amount of container scrubbing will keep malicious code in those dependencies out. You need runtime security to stand a chance against what might be out there. Last week I may have had a less ominous take; today I’m not so sure.
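To see how a handful of direct imports turns into thousands of dependencies, the sketch below walks a dependency graph breadth-first and collects everything reachable from an application’s direct dependencies. The graph is a made-up toy, not data from any real project or from the demonstration application mentioned above; in practice you would build it from a lockfile or a software bill of materials.

```python
from collections import deque


def transitive_dependencies(graph: dict[str, list[str]], roots: list[str]) -> set[str]:
    """Return every package reachable from `roots` (the direct dependencies)."""
    seen: set[str] = set()
    queue = deque(roots)
    while queue:
        pkg = queue.popleft()
        if pkg in seen:
            continue  # shared dependencies are only counted once
        seen.add(pkg)
        queue.extend(graph.get(pkg, []))
    return seen


# Hypothetical graph: package -> packages it depends on directly.
EXAMPLE_GRAPH = {
    "web-framework": ["http-server", "json-parser", "logging"],
    "http-server": ["tls", "logging"],
    "json-parser": ["unicode-utils"],
    "tls": ["crypto", "asn1"],
    "crypto": ["asn1"],
}

direct = ["web-framework", "json-parser"]
all_deps = transitive_dependencies(EXAMPLE_GRAPH, direct)
```

Here the developer chose only two packages, yet eight end up in the application; none of the six indirect ones come from the base image, so slimming the image does nothing about them.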

The Good News

The good news here is that it’s not all doom and gloom out there, and with Deepfactor, you can put another safety net in place to help you with these challenges. I look forward to engaging with existing and future customers to show how we can help in these crazy times.

If you’d like to learn more about how Deepfactor can help in situations like this, contact us to connect with our team.
