Learn how CVSS 4.0 – the first update in eight years – will impact you and the way your organization assesses software security vulnerability severity.

Topics covered include:

– What is CVSS 4.0?

– What are some of the key enhancements and improvements in CVSS 4.0?

– How easy is it to adopt, whether replacing CVSS 3.0 or using the two together?

– What are the limitations of relying just on CVSS base scores?

Transcript:

Kiran Kamity (00:05):

Welcome to the next episode of the Next-Gen AppSec series. The purpose of this series is to have lighthearted, coffee-shop-style conversations with the subject matter experts, practitioners, and executives who are truly pushing the boundaries of what’s possible with application security. Today’s topic of discussion is CVSS v4, and we have two subject matter experts with us, Vikas and Naman. Vikas, please introduce yourself.

Vikas Wadhvani (00:36):

Hello everyone. Hey, I’m Vikas Wadhvani. I’m the director of product engineering here at deepfactor, and I’ve been through the journey of progressing from AppSec one to AppSec two, and I’d love to share some of my journey with you on this LinkedIn live session.

Kiran Kamity (00:56):

Awesome. Naman.

Naman Tandon (00:59):

Hi everyone. Hi. I am Naman. I am currently based in Bangalore, and I work as a lead engineer at deepfactor, primarily focusing on the SCA and the runtime SCA correlation capabilities. So looking forward to a fruitful discussion on 4.0. Thank you.

Kiran Kamity (01:16):

Awesome. And the purpose of Next Gen AppSec series is to make sure that we’re having an informal coffee shop style conversation. So I’m going to start with what is your favorite coffee or tea? Vikas.

Vikas Wadhvani (01:30):

So actually it’s not a coffee at all. It’s probably hibiscus or chamomile tea.

Kiran Kamity (01:36):

Awesome. Naman.

Naman Tandon (01:39):

Yeah, it’s conditional for me. At times it’s coffee, at times it’s tea, but I mostly prefer tea or coffee with all the spices added to it, cinnamon and ginger and a bunch of other things. So especially masala tea.

Kiran Kamity (01:54):

Oh, masala chai. The chai. Nice.

Naman Tandon (01:58):

Exactly.

Kiran Kamity (01:59):

Awesome. I have taken a liking lately to turmeric chai. There are some things that they’re selling in the Bay Area that I seem to have gotten hooked on. Peet’s Coffee especially has this thing called Golden Chai, which is essentially turmeric with honey and some other things, which is my latest favorite at Peet’s.

Naman Tandon (02:18):

And you do drink it with milk or-

Kiran Kamity (02:24):

Yeah. Yeah. You have it with milk.

Naman Tandon (02:24):

Nice.

Kiran Kamity (02:25):

Or without, I guess. Awesome. So let’s get to CVSS v4. Guys, these are the topics that we’re going to chit-chat about today.

(02:34)

What is CVSS v4? What are some of the key enhancements and improvements that have gone into CVSS v4 compared to the previous versions? CVSS has been around since 2005. There was one, there was two, there was three. Why is there a need for four?

(02:50)

And let’s talk about some of the things that have gone in. Now, once v4 becomes mainstream, sometime next year, hopefully, is it easy to adopt? Is it going to replace CVSS v3 and how we track those scores, or are we going to be using them together?

(03:06)

And then if you rely purely on the base scores, what are the limitations of that? I’m guessing that’s one of the reasons why we’re looking at CVSS v4 as an industry. So let’s get started with that.

(03:24)

Naman, let’s talk about what CVSS is. Give us a bit of history. Who maintains this? Tell us about the FIRST organization and anything you’d like to share with respect to the background behind CVSS.

Naman Tandon (03:42):

Sure, Kiran. So CVSS is essentially the Common Vulnerability Scoring System. And as the name suggests, it’s a common or standard way to rate or score vulnerabilities. The scores are typically indicative of the technical severity of a vulnerability. And I’m emphasizing technical severity because a lot of people tend to associate it with true risk, which is questionable and debatable.

(04:05)

And we’ll get into the specifics of why that is a problem. So there wasn’t a standard as such for scoring vulnerabilities. But in 2005, FIRST, as you mentioned, which is the Forum of Incident Response and Security Teams, formed this special interest group and came up with this algorithm, a mathematical formula, which takes into account a lot of parameters that you would typically associate with a vulnerability. You feed in these parameter values, assign different weights, and come up with a score which then indicates the severity of that vulnerability.

(04:43)

Now there have been multiple revisions since 2005. It has been almost two decades now. And they have rolled out CVSS v2, v3, and 3.1, which was the last stable release, in 2019. And if I get into the specifics of 3.1, the score primarily comprised three aspects. So there were three categories of metrics per se, one being the base metric, the second being the temporal metric, and the third being the environmental metric.

(05:11)

So the base metric eventually results in the base score, which is now a de facto standard. Almost all the organizations that have anything to do with vulnerability management are, directly or indirectly, consuming these CVSS base scores, which are published in public databases like NVD, the National Vulnerability Database, or VulnDB, or the GitHub Security Advisory database.

(05:35)

A bunch of them are there. And they typically talk about metrics such as what the attack vector is. Is there some sort of user interaction required for the exploit to take place? Are there special privileges required for the attack to occur? And what’s the CIA impact? What’s the confidentiality, integrity and availability impact?

(05:55)

So these are a bunch of metrics which a vendor can actually analyze, and they’re independent of how you’re using those packages in your applications. So a vendor would typically analyze the vulnerability, come up with a score, and then publish it on the public databases that I mentioned. And then you will use it, directly or indirectly, through the vulnerability management tools.

(06:14)

And the other two lesser known, or I would say less popular, categories of metrics are the environmental and the temporal, which focus on the exploitability of the vulnerability and on where exactly you are running the application and in what sort of environment, which brings in more of an application context.

(06:33)

So these are supposed to be provided by the consumer, because a vendor would not have an idea about how you’re using those packages. We’ll get into the specifics of how 4.0 tackles these limitations, but that is essentially what CVSS is. And just to highlight, the score eventually is a number between zero and 10, 10 being the most severe and 0.1 being the least severe. That is what we eventually consume.
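The zero-to-10 scale described here maps onto qualitative labels. A minimal Python sketch, assuming the rating bands published in the CVSS specification (None, Low, Medium, High, Critical); the function name `severity_rating` is illustrative and was not part of the session:

```python
def severity_rating(score: float) -> str:
    """Map a CVSS score (0.0 to 10.0) to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 to 3.9
    if score < 7.0:
        return "Medium"    # 4.0 to 6.9
    if score < 9.0:
        return "High"      # 7.0 to 8.9
    return "Critical"      # 9.0 to 10.0
```

The label is derived from the number alone, so it carries the same limitation the speakers describe: it reflects technical severity, not risk in a particular deployment.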

Kiran Kamity (06:58):

So basically the score consists of three kinds of metrics. One is how severe it is, and that’s the base. And then you have temporal and environmental, which take into account the exploitability factors as well as the environment your application is deployed in and related things. But as I take it, the CVSS base score is what is generally consumed by most people today, right?

Vikas Wadhvani (07:27):

And one more point I’d like to add is we have to commend the hard work that FIRST.org does in coming up with these scoring mechanisms and upgrading them over time. They do realize the importance of the context of the application and encourage consumers to recalculate the CVSS score. Unfortunately that’s hard, and we’ll probably talk about that in the subsequent questions.

Kiran Kamity (07:56):

Awesome. Yeah, yeah. All right, so let’s talk about the improvements that have been made in CVSS version four. Why was there even a need for version four, given that you had three?

Naman Tandon (08:10):

Right. I’ll probably take that question. So before I get into specifics of what exactly has improved, let me reiterate what we just discussed, that in version 3 you have three types of metrics. As I said, the base score is what most of us consume, and often try to equate it with true risk and use it for prioritization of vulnerabilities.

(08:33)

But the nuance that gets missed is that you’re not taking into account the temporal or exploitability metrics. And you’re also not taking into account the application context. So for instance, let’s say a CVE requires internet access for exploitation or requires root privileges for an exploit to actually take place. But there’s a possibility that your application does not even expose those conditions, which means it’s less likely that your application would be exploited.

(09:03)

And that is the exact reason that CVSS 3 introduced these other metrics, which, as Vikas mentioned, are supposed to be filled in by the consumer to arrive at a precise score that you could maybe eventually associate with true risk. There are still limitations there, but you could eventually use that.

(09:22)

So there was some gap maybe in the documentation, maybe in the way it was marketed or advertised or consumed. And that’s the exact problem that the 4.0 version tackles first as one of the major changes. So the change that they bring in is the nomenclature change. And what that highlights is that there’s a standard way to now consume these scores. And there are four categories of scores. Now, if you can move to the next slide please.

(09:50)

So if you can see on the top, there are four categories. Now you have a CVSS-B score, and what is being emphasized is that whenever you consume any score through any vulnerability management tool, or if you’re looking at, say, NVD or CVE details, you would explicitly see that there’s a CVSS-B score with a value of, say, 7 or 7.5.

(10:12)

And that rings a bell in your head that okay, I am looking at CVSS-B, specifically CVSS-B, which means there are other nuances that are probably missing in this particular score. And that will force the AppSec teams or anybody who’s looking at this data to reconsider that maybe there are other attributes that I should worry about.

(10:32)

And this score itself is not enough to arrive at the true risk for that application. And that is where the other three categories of scores come into the picture: BT, which combines the base and threat scores; BE, which combines the base and environmental scores; and BTE, which covers the base, threat, and environmental scores.

(10:54)

So all these three scores obviously would not be directly provided by the vendor. As we said, the vendor is not aware of the application context, but there is a possibility that vulnerability management tools might eventually give you this value and help you prioritize vulnerabilities better. But yeah, we’ll have to wait and watch.
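The naming scheme described here can be derived mechanically from a CVSS 4.0 vector string. A minimal Python sketch, assuming the metric abbreviations from the CVSS 4.0 specification (`E` for the threat group; `CR`, `IR`, `AR` and the `M`-prefixed modified metrics for the environmental group). The helper names `parse_vector` and `nomenclature` are illustrative, and the sketch does not validate metric values or compute a score:

```python
THREAT_METRICS = {"E"}
ENVIRONMENTAL_METRICS = {
    "CR", "IR", "AR",
    "MAV", "MAC", "MAT", "MPR", "MUI",
    "MVC", "MVI", "MVA", "MSC", "MSI", "MSA",
}


def parse_vector(vector: str) -> dict[str, str]:
    """Split 'CVSS:4.0/AV:N/...' into a {metric: value} mapping."""
    prefix, _, rest = vector.partition("/")
    if prefix != "CVSS:4.0":
        raise ValueError(f"not a CVSS 4.0 vector: {vector!r}")
    metrics = {}
    for part in rest.split("/"):
        name, sep, value = part.partition(":")
        if not (name and sep and value):
            raise ValueError(f"malformed metric: {part!r}")
        metrics[name] = value
    return metrics


def nomenclature(vector: str) -> str:
    """Return CVSS-B, CVSS-BT, CVSS-BE or CVSS-BTE for a vector string."""
    metrics = parse_vector(vector)

    def used(names):
        # 'X' (Not Defined) counts the same as the metric being absent.
        return any(metrics.get(name, "X") != "X" for name in names)

    label = "CVSS-B"
    if used(THREAT_METRICS):
        label += "T"
    if used(ENVIRONMENTAL_METRICS):
        label += "E"
    return label
```

A vector that carries only base metrics yields `CVSS-B`; adding `E:A` makes it `CVSS-BT`, and adding a modified metric such as `MAV:L` on top of that makes it `CVSS-BTE`.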

Kiran Kamity (11:14):

Got it. So essentially-

Vikas Wadhvani (11:16):

Go ahead. Go ahead.

Kiran Kamity (11:17):

Essentially, it seems like a lot of these parameters are kind of similar. But with the previous version, 3 or 3.1, practitioners took for granted that CVSS refers to severity alone, and they ended up naturally ignoring the other aspects: exploitability, threat, environmental, et cetera. CVSS v4 makes an attempt to call them out specifically and bring them to the forefront.

Vikas Wadhvani (11:46):

I don’t even know if it was ignorance, it could just be lack of knowledge in some cases. I know a lot of people don’t realize that they have to recalculate CVSS score for each of the vulnerabilities that they detect.

(12:04)

And let’s say even if they did know that they have to, it’s a Herculean task. If you had a decently sized corporation, you have hundreds of artifacts, you have thousands of vulnerabilities. You would have to go to each artifact, understand the context, and recalculate the CVSS score. If you were to do this manually, it would take you months if not years.

(12:31)

And unfortunately, AppSec teams are lean; they have to handle a lot more applications than developers do. But what I like about CVSS v4 is that they’re making it very apparent to you that this is a base score alone and you need to add in the threat score and the environmental score to get to the true risk, which is CVSS-BTE, right?

(12:59)

And as I mentioned, doing that manually is going to be hard. AppSec teams need tools that provide all of these inputs that can then be plugged into the formula to get the right CVSS score. That’s true for your application, right? Yeah. That’s not a generic score. Now it’s personalized to your application.

Kiran Kamity (13:21):

And that also means that you have to go and fetch the information about your deployment mode, et cetera, and feed it in so that you can actually take advantage of these scores.

Naman Tandon (13:38):

And actually, before we move on, can you move back a slide, Kiran? There are some modifications in the base scores as well. One of the problems that most of us who have handled any sort of vulnerability management tool would have faced is that these tools often give you the CVSS base score, as we said. They then give you the vendor severity, and you’ll most probably filter by, say, scores greater than seven or eight because that gives you the high and critical severity vulnerabilities.

(14:12)

Now the problem with that is that of all the vulnerabilities that are published, almost 50 to 60% actually fall in that bracket. So even if you are using that filter, you end up receiving a huge list of vulnerabilities that you need to take care of. And most of you have gone through that pain of going back and forth with the AppSec team, saying, please give us a refined list.

(14:35)

This is not what we can tackle given the release work that you probably have to take care of and a bunch of other things. So that’s another pain point that CVSS 4.0 tries to address by making some modifications to the base score and bringing in some more granularity.

(14:53)

So an example of that is the impact metrics. The impact metrics were limited to the confidentiality, integrity and availability of the vulnerable system, the system that’s under attack. And you had a separate parameter called scope, which used to tell you whether a chain of attack is possible.

(15:13)

Your actual attack started from a vulnerable system, but are there subsequent systems which are affected by the chain? So the metric itself was not very straightforward and the definition was vague. And that’s the exact change that they have brought in with 4.0.

(15:28)

So if you see on the right, you now have different metrics for the vulnerable system and the subsequent system, and that brings in more granularity to the score. The same is the case with the exploitability metrics. So for example, user interaction-

Vikas Wadhvani (15:44):

Just to add a little color. So earlier the scope would just tell you if the subsequent system can be reached. Now what we are saying is, what is the impact on the subsequent system? So I can basically gain access to a vulnerable system and then I can reach a subsequent system. And now CVSS v4 allows you to tell what the impact on the subsequent system would be as well, right?

Naman Tandon (16:14):

Right, exactly. And from an exploitability metric standpoint, as I said, so for example, user interaction, you had values like none or required, now you have a granularity of required being broken now into two categories.

(16:28)

Is there an active interaction required or is there a passive interaction required? The same is the case with attack complexity. The complexity has now been further split into two categories, so you have attack complexity and attack requirements. Complexity indicates what sort of security measures you have to get through to have a successful exploit of that system.

(16:51)

And requirements are about what sort of prep work you need. Maybe there are certain configs that you need access to before you can even attempt an exploit. So these are two more nuances that have been brought in so that you’ll have a more granular score.

(17:04)

There are more discrete and distinct values between zero to 10 instead of a limited set of values that we generally play around with. So that’s another major change that they have gotten.
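The base-metric changes discussed in this turn (Attack Requirements alongside Attack Complexity, User Interaction split into passive and active, and separate impact metrics for the vulnerable and subsequent systems) can be written down as a small lookup table. A minimal Python sketch, assuming the abbreviations and allowed values from the CVSS 4.0 specification; `validate_base_metrics` is an illustrative helper that only checks values and does not score anything:

```python
# Allowed values per CVSS 4.0 base metric.
BASE_METRIC_VALUES = {
    "AV": {"N", "A", "L", "P"},   # Attack Vector
    "AC": {"L", "H"},             # Attack Complexity
    "AT": {"N", "P"},             # Attack Requirements (new in 4.0)
    "PR": {"N", "L", "H"},        # Privileges Required
    "UI": {"N", "P", "A"},        # User Interaction: None, Passive, Active
    "VC": {"H", "L", "N"},        # Vulnerable system confidentiality
    "VI": {"H", "L", "N"},        # Vulnerable system integrity
    "VA": {"H", "L", "N"},        # Vulnerable system availability
    "SC": {"H", "L", "N"},        # Subsequent system confidentiality
    "SI": {"H", "L", "N"},        # Subsequent system integrity
    "SA": {"H", "L", "N"},        # Subsequent system availability
}


def validate_base_metrics(metrics: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means all base metrics are valid."""
    problems = []
    for name, allowed in BASE_METRIC_VALUES.items():
        value = metrics.get(name)
        if value is None:
            problems.append(f"missing {name}")
        elif value not in allowed:
            problems.append(f"invalid {name}:{value}")
    return problems
```

A 3.1-style value such as `UI:R` (Required) is flagged as invalid here, because 4.0 asks whether the interaction is passive (`P`) or active (`A`).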

Kiran Kamity (17:16):

Interesting. So not just handling the point where the attack lands, but also the lateral movement after that and separating them into different aspects of the metric.

Naman Tandon (17:28):

Right, exactly.

Kiran Kamity (17:30):

Right. Nice. All right, let’s talk about this slide a bit. Go on. Naman.

Naman Tandon (17:38):

So again, this is a modification. So the first one that you see: 3.1 had a category called the temporal metric, and that comprised three metrics, exploit code maturity, remediation level, and report confidence. It was confusing because more or less they meant the same thing. They were interrelated, and there was some overlap. So they have simplified this as part of 4.0, where they are now calling it the threat metric, and it basically focuses on exploit maturity.

(18:10)

And as the value suggests, there is a possibility that there have been attacks, recorded attacks for that particular vulnerability. Or there could be proof of concept codes or exploit codes available in the wild. So all those categories would be covered as part of the temporal metric. So it’s not a lot of change, it’s just making sure that people can consume this easily as a metric. So that’s a modification that has been done.

Kiran Kamity (18:36):

So instead of clubbing your exploit maturity as well as remediation level and confidence into one thing called a temporal score, which can’t be used, especially when you’re relying on automated next-gen SCA type tools that feed in the parameters that are required for you to make sense out of this and arrive at a number, it makes it easy if you separate the exploit piece into its own thing and then the rest of it into supplemental metrics.

Naman Tandon (19:00):

Right. And I’ll just reiterate, temporal still is a metric which the vendor won’t provide, which means by default, any score that you’re consuming is most probably still a base score and it’s not taking into account the threat metrics given the reason that the exploits obviously is a dynamic piece of information, it tends to change. You might have an exploit at some point in time and it keeps on changing basically.

Vikas Wadhvani (19:24):

Yeah, that’s exactly what I was thinking, right. It’s a hard problem to solve, even if you calculated the score, the score is going to change with time. A new exploit is going to become available. So that is going to change your risk score. That is going to change your CVSS score. That means you have to re-prioritize a vulnerability that you possibly de-prioritized. So all the more reason for automation and a tool that can help automate calculation of these new CVSS scores.
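The point about scores drifting as exploits appear can be illustrated with a small re-scoring check. A minimal Python sketch, assuming the CVSS 4.0 Exploit Maturity values (`A` Attacked, `P` Proof-of-Concept, `U` Unreported, `X` Not Defined). The boolean inputs and both function names are hypothetical, and the CVE identifiers in the usage note are placeholders, not real entries:

```python
def exploit_maturity(has_threat_intel: bool,
                     attacks_observed: bool,
                     poc_available: bool) -> str:
    """Pick an Exploit Maturity (E) value from hypothetical threat-intel flags."""
    if not has_threat_intel:
        return "X"   # Not Defined: no reliable intelligence to judge from
    if attacks_observed:
        return "A"   # Attacked
    if poc_available:
        return "P"   # Proof-of-Concept
    return "U"       # Unreported


def rescore_queue(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return the CVE ids whose E value changed since the last run."""
    return sorted(
        cve for cve, value in current.items()
        if previous.get(cve, "X") != value
    )
```

For instance, if yesterday's run recorded `{"CVE-EXAMPLE-1": "U", "CVE-EXAMPLE-2": "P"}` and today's records `{"CVE-EXAMPLE-1": "A", "CVE-EXAMPLE-2": "P"}`, only `CVE-EXAMPLE-1` needs its score recalculated and its priority revisited.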

Kiran Kamity (19:53):

An interesting question here: the maturity of an exploit for a certain vulnerability is an external parameter. It can’t be captured by a fixed score that you give today, because tomorrow there could be something new on Metasploit, and that might make it easy for someone to take advantage of it. So does that mean that the exploit maturity has to constantly keep getting refined for all of the CVEs out there, which is a daunting task, no?

Vikas Wadhvani (20:18):

Yes, that’s exactly what it is. And the word temporal also means it changes with time, and that’s why it’s a hard problem. Even though FIRST gives us all the flexibility, it’s hard for consumers to do this on their own without automation.

Naman Tandon (20:41):

And I think, yeah, we should call out the effort that has been put in by FIRST and the SIG. They have constantly improved the methodology for coming up with this score, incorporating all the feedback from the community. So that’s a great job that they’re doing.

(20:58)

And it’s in fact something that we definitely use for prioritization. Maybe not the only parameter, but it’s definitely one of the parameters that we use. So that’s a great thing. And just wrapping up on this, there are a bunch of new metrics that have been introduced as part of 4.0 which are not there in 3.1, and those are the supplemental metrics.

(21:20)

So the good thing about the supplemental metrics is that the vendor is expected to provide this, but the bad thing about this is they’re optional. And the second bad thing about this is they will not be incorporated in the scores.

(21:34)

So these are independent attributes that may or may not be available through whatever databases or vulnerability management tools that you’re using. And if they’re available, you’ll have to have an explicit logic to maybe involve it in a decision tree to finally use it for your true risk.

(21:51)

And if I get into specifics, so some of them are safety, so safety actually is a new parameter that they have introduced which focuses on the impact on the environment or say what’s the impact on the human. So until now, when we talked about CIA, we referred mostly to data or say software systems.

(22:11)

But safety actually focuses on the human impact. So for instance, say you have a medical device or an automated car which is using some sort of software, and you have a vulnerability there which gets exploited. That can in turn impact the life of a human.

(22:27)

So that’s another notion that CVSS tries to bring in as part of the change. And then you have a bunch of other things, like automatable, meaning is the attack automatable; recovery, meaning how easy the recovery is; and value density, which indicates whether the attack impacts a specific set of resources or gives access to a lot of resources once the system is exploited.

(22:53)

And then what sort of a response effort is required to tackle the vulnerability or the exploit. And the last one is a provider urgency, which is sort of related to vendor severity, which most of the tools today provide, but it was not incorporated in the CVSS score per se. So the provider urgency is actually supposed to indicate that.
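Because the supplemental metrics do not feed into the score, any use of them has to be explicit logic on the consumer's side, as noted earlier in this turn. A minimal Python sketch, assuming the supplemental abbreviations from the CVSS 4.0 specification (`S`, `AU`, `R`, `V`, `RE`, `U`). The escalation rule in `should_escalate` is a purely hypothetical policy, not something prescribed by FIRST or described by the speakers:

```python
SUPPLEMENTAL_METRICS = {
    "S": "Safety",
    "AU": "Automatable",
    "R": "Recovery",
    "V": "Value Density",
    "RE": "Vulnerability Response Effort",
    "U": "Provider Urgency",
}


def supplemental_metrics(vector: str) -> dict[str, str]:
    """Extract the supplemental metrics that are present and defined in a vector."""
    found = {}
    for part in vector.split("/")[1:]:   # skip the 'CVSS:4.0' prefix
        name, _, value = part.partition(":")
        if name in SUPPLEMENTAL_METRICS and value != "X":
            found[SUPPLEMENTAL_METRICS[name]] = value
    return found


def should_escalate(vector: str) -> bool:
    """Hypothetical policy: escalate on safety impact, automatable attacks,
    or a provider urgency of Red."""
    metrics = supplemental_metrics(vector)
    return (
        metrics.get("Safety") == "P"              # Present
        or metrics.get("Automatable") == "Y"      # Yes
        or metrics.get("Provider Urgency") == "Red"
    )
```

Since vendors may omit these metrics entirely, the sketch treats a missing metric the same as Not Defined and falls back to not escalating.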

Vikas Wadhvani (23:13):

And I guess it makes sense not to incorporate this into the score; I’m sure FIRST thought about it. All of these tend to talk about the impact of an attack. So it could be a low severity vulnerability, but what happens if it gets attacked? I think it talks about those, and this is supplemental information that will definitely help AppSec teams understand the impact of the attack and how to recover from it.

Naman Tandon (23:51):

Right.

Kiran Kamity (23:52):

Right. Got it. All right, so I think we’ve talked about the temporal part. Let’s now switch to the environmental score metrics and talk about that.

Naman Tandon (24:03):

Sure. So from an environmental score perspective, similar to what 3.1 did, it’s more or less the same as the base score. I mean, whatever base metrics we look at to arrive at a base score are essentially what the environmental metrics also comprise.

(24:23)

And as you can see in terms of the abbreviation or the name, it says modified attack vector or modified attack complexity. What that means is that you have a bunch of base metrics and that is indicating the technical severity depending upon typically how that exploit could occur for a specific type of system.

(24:40)

But as we discussed, some of the vulnerabilities might require network access, but your application might not be exposed to the internet. Or they might require root privileges, but your application might not expose that condition.

(24:54)

So you are supposed to feed in those values and then modify the environmental metrics. That is exactly what this is supposed to do. As you can see in the title, it says modified base metrics.

(25:07)

So bring in the application context, bring in the context of where exactly your application is running, and arrive at a more precise score which would work for you. Yeah.

Kiran Kamity (25:18):

Got it. So how are these now separated out? It seems like a lot of them are pretty much similar entries on both the left, for CVSS 3.1, and the right.

Naman Tandon (25:35):

So the separation is, as I said, similar to what base metric did. So the changes in base metric were getting rid of the scope parameter and bringing in the context of vulnerable systems, vulnerable subsystems, and the impact on the subsequent systems.

(25:51)

So the changes are the same. The metrics are the same as what you would see in the base scores. The only thing is the vendor is not going to provide you this value. It’s the consumer who is supposed to feed in this value. An analogy would be, say you go to a doctor and say, I have this problem, and he prescribes you medicine. But if he doesn’t take into account what allergies you have or what other problems you have and just prescribes the medicine, you are obviously not going to benefit from it.

(26:24)

So same as the case with this, bring in the application context and then probably take the pill that you were looking for.

Kiran Kamity (26:32):

Got it. Got it. And it’s the practitioner’s responsibility, whether directly or by using a tool, to understand the environmental characteristics of a deployed application and then feed that back into the environmental part of the CVSS v4 score, to understand how much of a risk this particular CVE poses to you, as opposed to purely relying on the base score.

Naman Tandon (27:09):

Exactly, exactly.

Kiran Kamity (27:10):

Right. Yeah, I mean essentially the move here is, as an industry, we’re trying to move from a theoretical exercise, so to speak, that is purely based on: here’s the severity, fix everything that’s greater than CVSS eight, right?

(27:24)

That’s kind of what a lot of the organizations do today to a risk-based approach where it’s not just the severity because if you purely rely on just the severity, the numbers can overwhelm your developer teams.

(27:34)

So you’ve got to move to a model where you are looking at the true risk of these CVEs that exist in your application, and then based on that arrive at a reduced set of alerts or vulnerabilities that you truly want your developers to go spend time fixing. Right?

Naman Tandon (27:49):

Right, right.

Kiran Kamity (27:53):

Got it. Cool. So now I know CVSS v4 work is still ongoing and a lot of the base scores are not there yet. What are some of the timelines? And once you have these CVSS scores, is the expectation that organizations completely cut over from three to four, or, just like between two and three, are we going to be using both scores for the foreseeable future?

Naman Tandon (28:15):

Vikas, do you want to take that?

Vikas Wadhvani (28:23):

Yeah, sure. I think it’s going to take time to get CVSS v4 scores for a lot of the vulnerabilities. We may not even get scores for some of the old vulnerabilities. So I think in the meanwhile, consumers will need to consume both CVSS v3 and v4, like we did when CVSS v3 came out. We used a combination: if CVSS v3 is there, use that; if not, fall back to v2.

(28:53)

My hunch is that it’ll probably take six to 12 months before we start seeing CVSS v4 scores for a lot of the new vulnerabilities as well as the prevalent vulnerabilities of today.
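The fallback approach described in this answer (use the newest score that exists, otherwise fall back to an older version) can be sketched in a few lines of Python. The input shape, a mapping from CVSS version to base score, and the name `pick_score` are assumptions for illustration; real vulnerability feeds structure this data differently:

```python
from typing import Optional

# Newest version first; the first match wins.
VERSION_PREFERENCE = ("4.0", "3.1", "3.0", "2.0")


def pick_score(scores: dict[str, float]) -> Optional[tuple[str, float]]:
    """Return (version, score) for the newest CVSS version available, if any."""
    for version in VERSION_PREFERENCE:
        if version in scores:
            return version, scores[version]
    return None
```

Returning the version together with the score matters, because the same vulnerability can be rated differently under v3 and v4, so anything consuming the result should record which version it came from.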

Naman Tandon (29:12):

And to add another point, when the CVSS v4 specification was released this November, they provided a bunch of examples as well to show how CVSS v3 and v4 scores would differ.

(29:24)

In a lot of cases where vulnerabilities were rated high or critical severity, the severity actually came down. But the question is, is that the true severity that we should be looking at? So there could be a debate in the future. For a lot of vulnerabilities where the severity got changed, people might start questioning the change itself.

(29:46)

So there is a possibility that the FIRST SIG would have to revisit some of these metrics or redefine them. Similar to how we saw a change from 3 to 3.1, you might see changes here as well. So we have to wait and watch for when it becomes stable and is ready for actual consumption.

Vikas Wadhvani (30:04):

And organizations will need to, in some sense, re-baseline with these new scores and reprioritize which of the vulnerabilities they should be fixing. But that’s a little way off. I’m sure the industry will evolve, and we’ll learn more over the next six to 12 months.

Kiran Kamity (30:33):

Yeah. Got it. Okay. So now let’s switch to another interesting topic because currently the way CVSS is consumed is primarily by looking at the base scores. And as I said, if you purely rely on the base scores, then we stand the risk of giving a long list of things to your developers. So let’s talk about the limitations of relying just on the base scores. Vikas.

Vikas Wadhvani (31:00):

I think we touched upon this during the talk. So I mean, FIRST.org itself acknowledges that the base score is not enough, and they’re making it very apparent in v4 by actually coming up with different acronyms: CVSS-B, CVSS-BT, CVSS-BTE, right?

(31:21)

They want to make it more and more apparent that the base score in itself is not the true risk, because without context, you cannot define the risk of a vulnerability. And unfortunately, the vendor doesn’t know the context of the application in your environment. So the vendor can’t help you there.

(31:47)

Now this puts the onus on the consumer to figure out the context, and unfortunately that’s a hard problem for already lean AppSec teams that have to manage so many applications. I mean, we feel for AppSec people. We speak to them on a daily basis, so we know how hard their job is. They definitely need some sort of automation to help them.

(32:11)

But it is quite obvious that you cannot rely on the base score alone because that is in some sense, some sort of an average risk that the vendor is trying to tell you that this is the average risk.

(32:26)

That doesn’t mean much, because that average risk has to be recalibrated to your environment. And unfortunately that has to be done by the org itself or a tool that the org uses.

Kiran Kamity (32:40):

Yeah. Naman, you have anything to add?

Naman Tandon (32:43):

No, I think Vikas pretty much covered everything, and I think most of us, as I said, have gone through this pain of looking at the huge report with thousands of vulnerabilities to fix.

(32:51)

So unless that number comes down, I don’t think you can rely just on CVSS base scores. And I believe it will come down if you start bringing in the context of the application, the running application, and exploitability and a bunch of other things that we talked about.

Kiran Kamity (33:09):

From what I see, as you guys know, I talk to a lot of these customers across the board on a daily basis. So what I clearly see is there are three distinct personas with three distinct set of problems.

(33:21)

So if you look at dev, AppSec and leadership, the problem with relying purely on CVSS base scores for dev is that they get overwhelmed with the number of vulnerabilities to fix. And many times it’s not even representing a real risk to the application.

(33:34)

It just becomes a theoretical exercise of: I have a lot of vulnerabilities, and I have to fix them because the CVSS is greater than eight, for example. From an AppSec practitioner’s perspective, because there is no other metric that is automatable, the natural inclination is to rely on CVSS scores to produce the list of things they would like the dev teams to fix.

(33:58)

So that ends up making the dev teams think of the AppSec teams as somebody that is just giving them work needlessly. So dev stops taking the list that the AppSec team provides seriously enough, and many times DevSecOps programs fall flat.

(34:15)

From a leadership perspective, the leadership teams end up tracking vulnerability counts in a graph and falsely assuming that it is an accurate representation of how the security of the application is doing. It becomes an academic exercise, and just to bring the counts down, they end up using their limited dev resources and limited AppSec resources inefficiently to fix a whole bunch of things.

(34:40)

But if you switch the paradigm, and instead of just relying on the base scores you rely on risk to the application, with risk being a combination of base scores, exploit maturity and exploit availability, as well as some of the other parameters about the environment of the application, such as runtime reachability or topology of the application, then you can arrive at a much smaller number of meaningful things that actually represent risk to the organization, and therefore your dev teams end up fixing what actually matters.

(35:10)

Smaller number of things, so they’re happy. AppSec teams essentially become heroes, because they’re the ones that are giving only the important things to the developers. And leadership teams end up tracking the risk as opposed to just a count of vulnerabilities. That’s kind of what I see out there: the difference between AppSec teams that purely rely on base scores and forward-thinking AppSec teams that are actually relying on true risk.

Vikas Wadhvani (35:39):

I completely agree. That was well put.

Kiran Kamity (35:42):

Yeah, thanks. So the holy grail is if we can deliver something like this to the AppSec teams, so instead of just telling them, here’s the total number of severe vulnerabilities, which in this case for this application is 387 things that are vulnerable with a CVSS above a certain threshold.

(36:02)

If you can do a couple more drill-downs on it and say which ones are actually reachable and used by your application (we’ve observed your application run in dev, test, and prod for a certain baseline period, and we notice that these are the important reachable things), and then out of them, a small subset is actually reachable, used, and has exploits available, et cetera.

(36:24)

That will help you as an AppSec team tell your developers: look, don’t worry about the 387 first. Worry about these two first, because that’s the highest risk. Once you address that, then go down to your 72, and then go fix the remaining 387 if you have time. So that would make for a more mature AppSec organization. Right.
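The drill-down described above (all severe findings, then the reachable and used subset, then the subset with exploits available) can be sketched as a chain of filters. This is a minimal illustration, not deepfactor’s implementation: the `Finding` fields, the `triage_tiers` function, and the default threshold of 8.0 (taken from the "greater than eight" example earlier in the conversation) are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record for one vulnerability finding."""
    cve_id: str
    cvss_base: float
    used_at_runtime: bool    # component observed in use during a baseline period
    reachable: bool          # vulnerable code is reachable by the application
    exploit_available: bool  # a public exploit or POC is known


def triage_tiers(findings, min_cvss=8.0):
    """Return (urgent, in_use, severe), from narrowest to widest tier.

    severe: everything above the CVSS threshold (the widest tier).
    in_use: severe findings that are also used at runtime and reachable.
    urgent: in_use findings that also have an exploit available.
    """
    severe = [f for f in findings if f.cvss_base > min_cvss]
    in_use = [f for f in severe if f.used_at_runtime and f.reachable]
    urgent = [f for f in in_use if f.exploit_available]
    return urgent, in_use, severe
```

Developers would work through `urgent` first, then the rest of `in_use`, and only then the remainder of `severe`, which mirrors the "two, then 72, then 387" ordering in the example.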

(36:50)

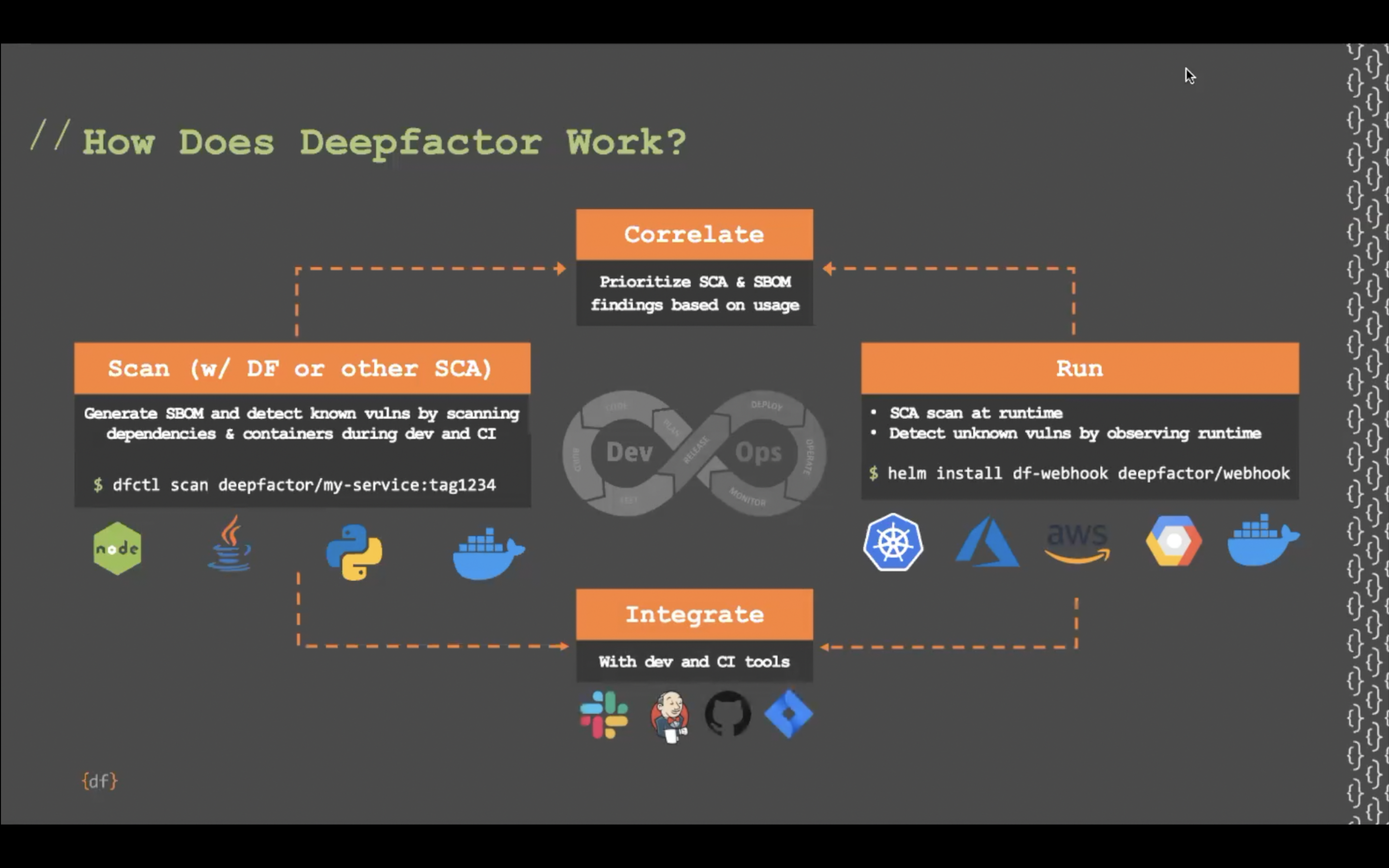

Cool. And I know at deepfactor, we’ve come up with these six parameters that will actually help us arrive at that triangle. So the triangle that I showed in the previous slide is the holy grail, but in order to arrive at that triangle, you’ve got to make sure that you consider all of these six parameters.

(37:07)

The biggest bang for the buck is the component, the resource in question: is that actually being used by your application or not? Runtime usage is the primary thing, and reachability, we see, is an extremely important thing that actually goes hand in hand with runtime usage. On reachability, there are a couple of different approaches that we’re seeing in the market.

(37:31)

Some tools are using call-graph-based reachability approaches. Some tools, like deepfactor, are using runtime-based approaches to reachability. Of course, runtime reachability is the holy grail that everybody wants to get to, because simply relying on call graphs has a whole bunch of blind spots, such as not seeing certain libraries that are loaded in your base container images or components that get dynamically loaded.

(37:52)

You end up prioritizing dead code as an important thing because it looks reachable, even though it never gets called by your application, and so on and so forth. So anyway, pick your reachability tool and figure out which approach makes sense for your organization.

(38:06)

Exploit availability is another metric, covering whether an exploit is available, whether there is a POC for the exploit, whether there has been active exploitation, and so on and so forth.

(38:17)

Topology of your application: do you have internet-accessible components? Severity: CVSS as well as vendor-assigned scores, the CVSS base score, now that you’ve educated me on the CVSS 4 metrics. And of course the applicability: is it applicable to the operating system that is sitting in my container? Is it applicable to the Java runtime that I’m using for my application, et cetera.

(38:38)

So if you can factor in these six metrics, you’ll be able to arrive at the triangle that I showed you in the previous slide, and therefore improve the maturity of the AppSec process around triaging and remediation workflows. I know you guys have seen me pitch this slide a few times. Anything you’d like to add?
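One way to picture how the six parameters could combine is as a sort key. The sketch below is only an illustration under stated assumptions: the `RiskContext` fields and the `prioritize` function are hypothetical names, and the precedence order (runtime usage, then reachability, then exploit availability, then topology, then severity, with non-applicable findings dropped) is one reading of the discussion, not a documented deepfactor algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskContext:
    """Hypothetical per-finding context covering the six parameters."""
    cve_id: str
    used_at_runtime: bool    # runtime usage
    reachable: bool          # reachability
    exploit_available: bool  # exploit availability
    internet_facing: bool    # topology
    cvss_base: float         # severity
    applicable: bool         # applies to this OS or language runtime


def prioritize(items):
    """Drop non-applicable findings, then rank the rest, highest risk first.

    Tuples compare element by element, so earlier fields dominate:
    a used and reachable finding outranks an unused one even when the
    unused one has a higher CVSS base score.
    """
    relevant = [i for i in items if i.applicable]
    return sorted(
        relevant,
        key=lambda i: (
            i.used_at_runtime,
            i.reachable,
            i.exploit_available,
            i.internet_facing,
            i.cvss_base,
        ),
        reverse=True,
    )
```

A strict ordering like this avoids inventing numeric weights; a team that prefers a single blended score could replace the tuple with its own weighted formula.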

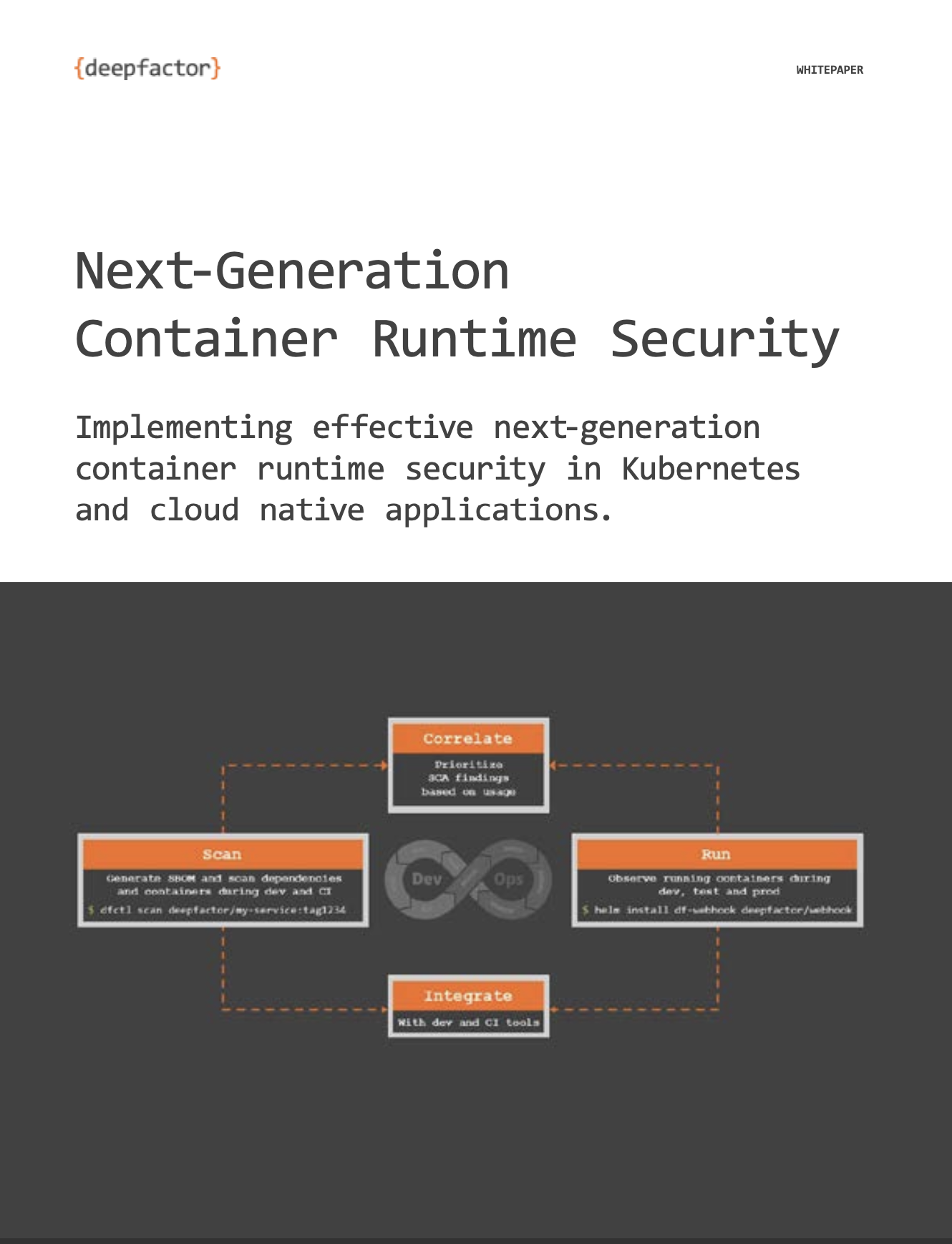

Vikas Wadhvani (38:59):

I think this is the AppSec 2.0 framework that we actually documented in our white paper, and that’s been received quite well. And I think it resonates, right? It resonates with the problem AppSec people face. So I’m sure you’ll show the QR code, and I would request all of you to scan the QR code, download the white paper, give it a read, and let us know what you feel in the comments.

Kiran Kamity (39:31):

Here is, by the way, a link to the white paper. If you look at the QR code on the right, that shows you a link to the SCA 2.0 white paper. And if you’d like to see how the deepfactor product can bring about maturity in your AppSec initiatives, especially around making sense out of the long list of vulnerabilities that SCA tools generally give out, check out deepfactor’s Next-Gen SCA product.

(40:00)

There’s a free trial available, so that’s the QR code on the right. You can also go to deepfactor.io, our website to learn more.

(40:09)

I also want to call your attention to the next episode that we’re going to have. We’re going to be having a couple of really knowledgeable speakers, Evan from CyberArk as well as Mike, the CTO of deepfactor.

(40:23)

We’re going to be talking next week or in the next episode about secrets, the myriad places where secrets could be hiding and not just code and container images, but also inside your environment variables inside your process memory and so on and so forth. So it’s going to be quite an interesting talk. Check it out. Here is the QR code to sign up for it.

(40:46)

All right, so any questions that you guys have, please post them in the LinkedIn comments or reach out to one of the deepfactor representatives. We’re more than happy to talk about it.

(41:01)

There’s a deepfactor LinkedIn page. If you’re interested in these sorts of topics, follow the deepfactor LinkedIn page and we’ll keep posting some of the upcoming episodes of the Next-Gen AppSec series, as well as other interesting things about AppSec.

(41:19)

We also have a deep hack series where every other week we post some interesting multiple-choice questions about application security topics, interesting hacks, and whatnot.

(41:36)

So when you sign up or when you follow deepfactor on LinkedIn, you’ll be able to see those types of things as well. Hope you enjoyed this coffee shop style conversation, Next-Gen AppSec series episode two, about CVSS 4. I really want to thank Naman and Vikas, our subject matter experts for today. Thank you, Naman. Thank you, Vikas.

Vikas Wadhvani (42:00):

If there’s any feedback, please feel free to post it in the comments. If you want us to talk about a particular subject, if you want us to change our style, if you want us to change our T-shirts, if you want us to grab coffee, whatever it is, please feel free to post it in the comments and we’ll try to update the format as best as we can.

Kiran Kamity (42:23):

Next time, we’ve got to make sure that we get our cup of coffee in the Zoom background. My coffee cup is not showing, but yeah.

Vikas Wadhvani (42:28):

Exactly. I should have got my hibiscus or chamomile tea.

Kiran Kamity (42:36):

Right? Definitely not coffee because it’s midnight for you. All right, yeah. Thank you everyone. Have a good time.