Wednesday, May 15, 5pm ET, Dell Customer Center, 1 Pennsylvania Plaza, 34th Floor, New York, NY

Two sessions:

Session 1: An intro to DTR. Founder & CEO Adam de Delva will introduce DTR's services and explain how the company develops Platform-as-a-Service (PaaS) for cloud-native applications and security.

Session 2:

Topic: “Vulnerability Reachability Analysis Using OSS Tools.” This 90-minute live, hands-on workshop will be held on Wednesday, May 15, 2024.

Instructor: Deepfactor CTO & Founder Mike Larkin. Mike is a contributor to OpenBSD, working on hypervisors, low-level platform code, and security. He is also an adjunct faculty member at San Jose State University, where he teaches application security technologies and virtualization.

Deepfactor is pleased to sponsor this gathering. RSVP here, and be sure to fill in this sign-up sheet so you can get through security at 1 Penn Plaza.

## Abstract:

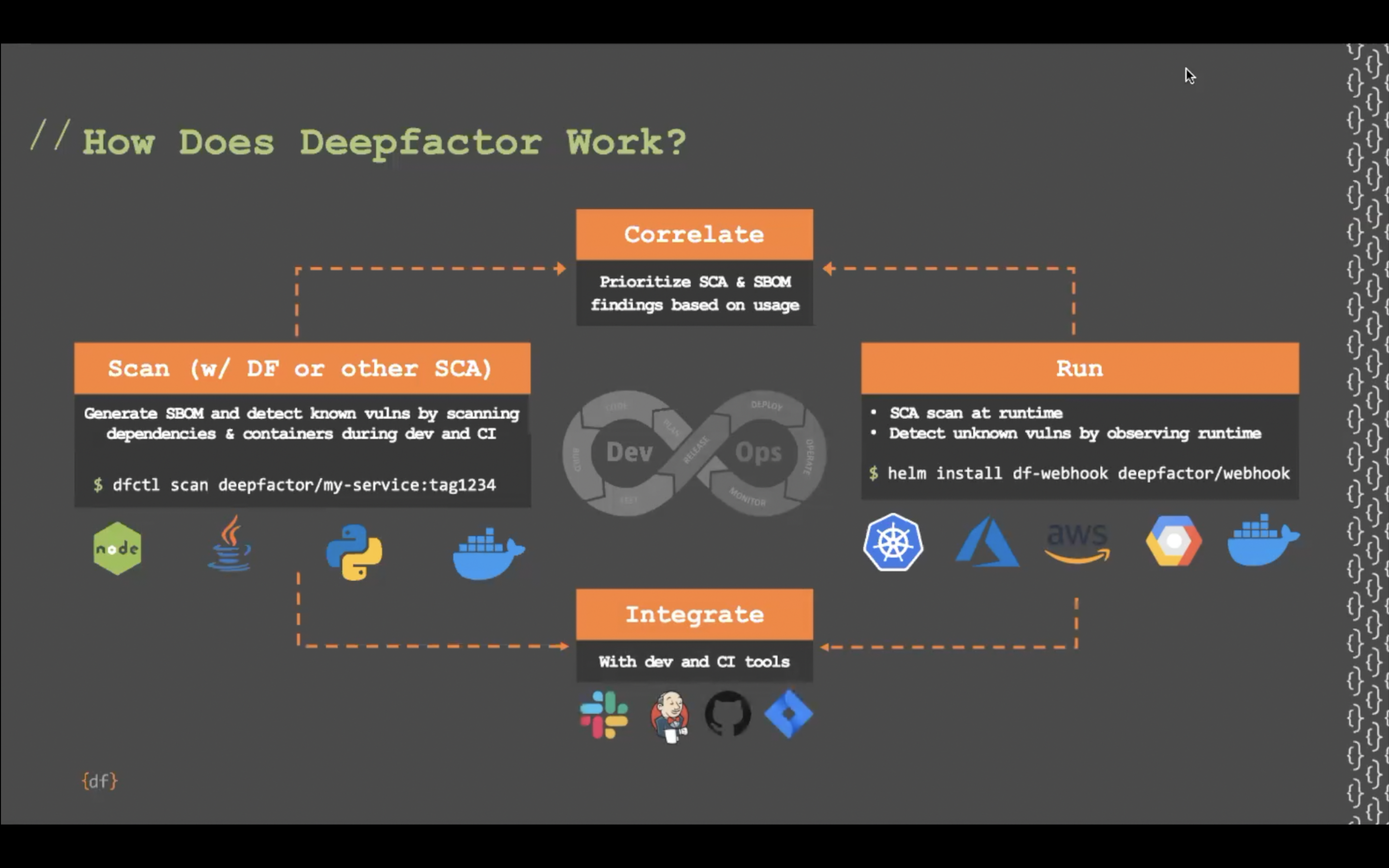

New vulnerabilities are disclosed every day in dependencies that you or your team may be using. But how do you know if you are actually using the vulnerable code? This talk will show you how to use two different types of tools to analyze reachability, that is, to decide whether a vulnerability needs to be prioritized based on how your own code uses the affected dependency.

## Workshop Overview:

The workshop will be broken into several modules. Introductory modules will cover the workshop organization and administrative matters (installing and configuring the tools used in the workshop). Subsequent modules will outline what vulnerability reachability is and why it is important, then compare and contrast the two main ways of understanding reachability (static call graphs and runtime analysis).

Next, the workshop will present two short exercises that give attendees hands-on experience using both types of tools against real applications with real vulnerabilities. Interpreted languages (Java) and compiled languages (C/C++/Go) will be covered. The module after the exercises will walk through how to interpret the results and draw conclusions. The languages chosen are merely representative; the skills learned in the workshop are equally applicable to other languages.

The workshop will conclude with two modules which will present a short overview of commercial tools and a conclusion/wrap-up/Q&A session.
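
As a rough illustration of the two approaches named above, the sketch below contrasts a static call-graph search with a runtime observation check. It is not taken from the workshop materials: the call graph, the function names (such as `lib.unsafe_deserialize`), and the recorded trace are all hypothetical, and real tools produce far larger graphs and traces.

```python
from collections import deque

# Hypothetical static call graph (caller -> callees); not from any real app.
CALL_GRAPH = {
    "main": ["parse_args", "handle_request"],
    "parse_args": [],
    "handle_request": ["render_page", "lib.safe_encode"],
    "render_page": [],
    "lib.safe_encode": [],
    "admin_tool": ["lib.unsafe_deserialize"],
    "lib.unsafe_deserialize": [],
}

# Hypothetical list of functions observed while the application ran.
RUNTIME_TRACE = ["main", "parse_args", "handle_request", "render_page"]


def find_call_path(graph, entry, target):
    """Static view: breadth-first search for a call path from entry to target.

    Returns the shortest path as a list of function names, or None if the
    target cannot be reached from the entry point in this graph.
    """
    queue = deque([[entry]])
    seen = {entry}
    while queue:
        path = queue.popleft()
        if path[-1] == target:
            return path
        for callee in graph.get(path[-1], []):
            if callee not in seen:
                seen.add(callee)
                queue.append(path + [callee])
    return None


def observed_at_runtime(trace, target):
    """Runtime view: was the target function seen in the recorded trace?"""
    return target in set(trace)


if __name__ == "__main__":
    for func in ("lib.safe_encode", "lib.unsafe_deserialize"):
        print(func, find_call_path(CALL_GRAPH, "main", func),
              observed_at_runtime(RUNTIME_TRACE, func))
```

In this toy data, `lib.safe_encode` has a static path from `main` but never appears in the trace, which hints at the trade-off between the approaches: a static graph can report paths that a given run never exercises, while a runtime trace only covers what the test workload actually executed.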

## Workshop Outline:

I. Overview (10 minutes)

- A. Workshop organization

- B. About the tools and sample applications

- 1. What are the tools and applications we are going to use?

- C. Obtaining/installing the tools and sample applications

- 1. Cloning from the GitHub repo

- D. Goals of the workshop (what you will learn)

- 1. Be able to understand the importance of vulnerability reachability and how it helps prioritize remediation strategy

- 2. Become familiar with some of the tools available to help with vulnerability reachability

- 3. Learn where to go for more help in these areas after the workshop

II. Types of reachability analysis (10 minutes)

- A. Static analysis / call graphs

- 1. What is a call graph?

- 2. What information does a call graph provide to you?

- B. Runtime analysis

- C. Language and environment considerations

- 1. Things to consider when choosing a reachability analysis solution

- a. Types of applications being analyzed (COTS vs self-written)

- b. Availability of source code

- c. Robustness of test environment

III. Static call graph analysis exercise (20 minutes)

- A. Using static call graph analysis in IntelliJ/Eclipse to analyze a Java application

- B. Using Go callgraph to analyze a Go application

- C. How to correlate a call graph with an SBOM

IV. Dynamic/runtime analysis exercise (20 minutes)

- A. Using a Java agent to analyze runtime reachability in a running Java application

- B. Using Valgrind/KCachegrind to analyze a running C/C++ application

- C. How to correlate runtime analysis with an SBOM

V. Results comparison (10 minutes)

- A. Using the results of each exercise to determine if vulnerable code was used

- 1. How to use the output of each tool to understand what vulnerabilities need to be prioritized

- B. Benefits and limitations of each approach

VI. Conclusion and Q&A (20 minutes)
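
Outline items III.C and IV.C concern correlating analysis output with an SBOM. A minimal sketch of that idea follows, under loud assumptions: the component list is a simplified stand-in for an SBOM (not CycloneDX or SPDX), the advisory records with their `EXAMPLE-` identifiers and vulnerable symbols are invented for illustration, and the set of reached symbols stands in for whatever a call-graph or runtime tool would report.

```python
# Hypothetical, simplified SBOM: just component names and versions.
SBOM_COMPONENTS = [
    {"name": "libparse", "version": "1.2.0"},
    {"name": "libimage", "version": "4.0.1"},
]

# Hypothetical advisories mapping a component to a vulnerable symbol.
ADVISORIES = [
    {"id": "EXAMPLE-0001", "component": "libparse", "symbol": "libparse.decode"},
    {"id": "EXAMPLE-0002", "component": "libimage", "symbol": "libimage.resize"},
    {"id": "EXAMPLE-0003", "component": "libother", "symbol": "libother.run"},
]

# Hypothetical symbols reported as reached by a static or runtime tool.
REACHED_SYMBOLS = {"main", "libparse.decode", "libimage.load"}


def prioritize(components, advisories, reached):
    """Return (advisory id, component, status) tuples, reachable ones first.

    Advisories for components that are not listed in the SBOM are skipped.
    """
    present = {c["name"] for c in components}
    report = []
    for adv in advisories:
        if adv["component"] not in present:
            continue
        status = "reachable" if adv["symbol"] in reached else "not observed"
        report.append((adv["id"], adv["component"], status))
    # sorted() is stable, so ties keep their original advisory order.
    return sorted(report, key=lambda row: row[2] != "reachable")


if __name__ == "__main__":
    for row in prioritize(SBOM_COMPONENTS, ADVISORIES, REACHED_SYMBOLS):
        print(row)
```

A "not observed" status here only means the symbol was absent from the supplied results; whether that is strong evidence depends on how complete the call graph or test workload was.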

## Outcome/Learnings

- Understand what vulnerability reachability is and why it is important

- Compare/contrast the two main ways of understanding reachability (static call graphs and runtime analysis)

- Via hands-on experience, use both types of tools against real applications with real vulnerabilities. Interpreted languages (Java) and compiled languages (C/C++/Go) will be covered.

- Learn how to interpret the results obtained from the exercises and draw conclusions